Homelab Update

I recently (Summer 2020) took some time to revisit, update, and document my homelab. This was also a great time to upgrade the primary hypervisor that I've been using since 2013, ~7 years ago.

Info

A few things have changed since I originally wrote this post. The most important changes are the network card and the storage: I've switched to a 10G network card (10Gbps, not 10Gtek, the NIC manufacturer), and I've moved to a single 2TB NVMe drive instead of the RAID-based SSDs. Generally, it is not recommended to use hardware RAID with Proxmox. Either a single large drive or a simple ZFS pool is the current (2023) recommendation.

New Hypervisor Parts

The parts used for this build are primarily new, but I reused the case and a few case fans (more on those later) from my existing hypervisor. The goal was to put together a system that satisfies my current requirements while also

- AMD Ryzen 9 3950X 16-Core CPU

- Noctua NH-C14S CPU Cooler

- ASRock Rack X470D4U Motherboard

- 10Gtek Quad Gigabit Intel NIC

- 4x Corsair Vengeance LPX 32GB (Total 128GB) DIMM

- Startech SSD Raid Controller

- 2x Samsung 860 EVO 1TB SSD

- Seagate IronWolf 4TB HDD

- Thermaltake RGB 850W (Details Below)

- 2x Noctua NF-A12x15

This mix of parts brings a lot of processing power with a healthy amount of RAM for a home system without completely breaking the bank.

Gotchas

This project introduced a few interesting challenges and a reminder that it is sometimes best not to try to juggle too many things at one time. A few of the things I ran into during the build were due entirely to me forgetting fairly simple things.

CPU Cooling Fit

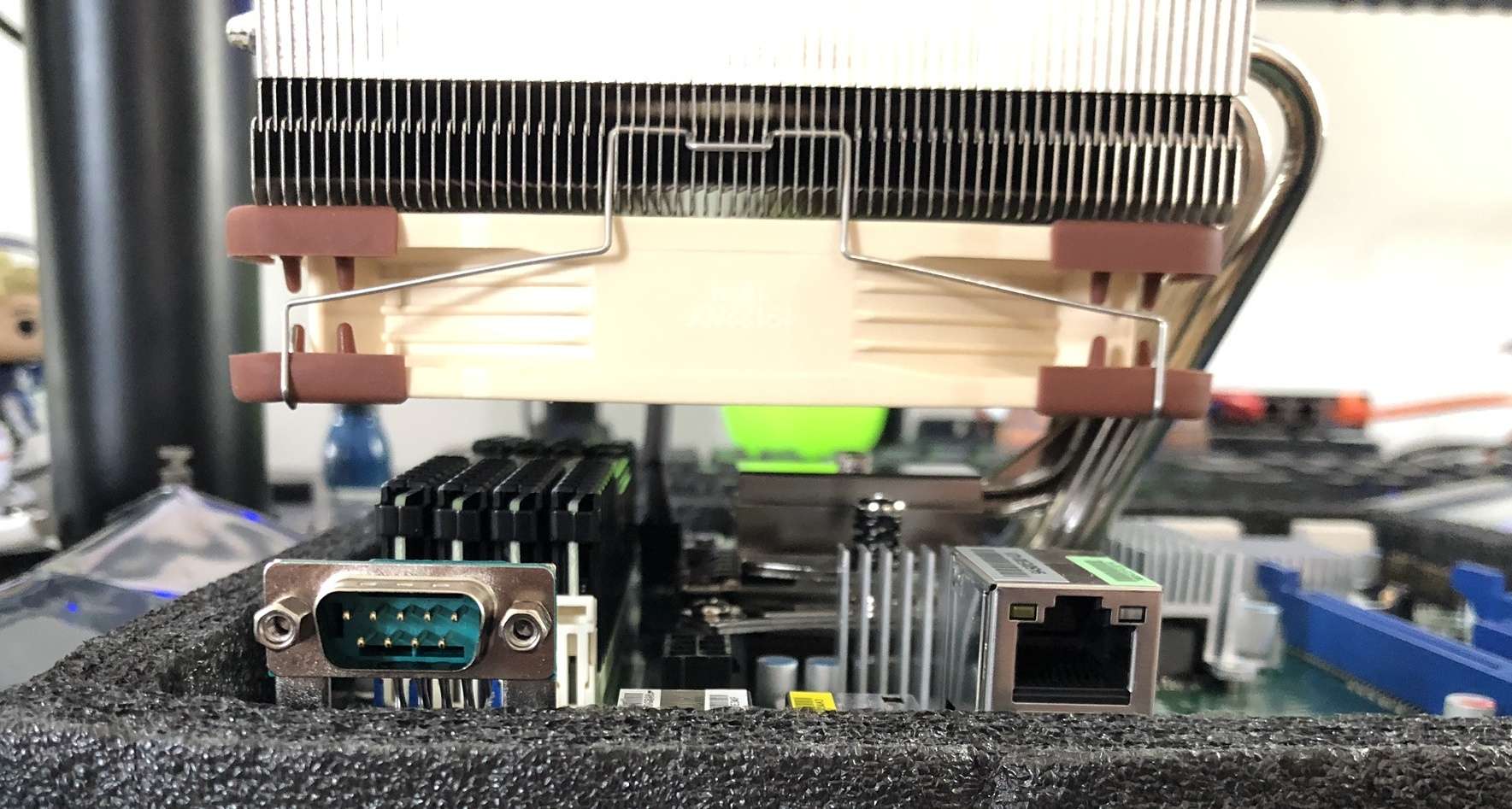

The ASRock Rack X470D4U is a "workstation" motherboard that works pretty well as a home server board. The board comes with dual gigabit NICs, integrated out-of-band management (think Dell DRAC or HP iLO), and support for a lot of RAM. I was fortunate to read Serve The Home's review of the big brother of my motherboard, the ASRock Rack X470D4U2, which let me know that one of the issues with the ASRock Rack boards is that the CPU socket is VERY close to the memory slots. Knowing this, I hoped that the Noctua NH-C14S might be a good fit. The cooler/radiator seemed like it would have enough clearance for the memory, but I wasn't sure if the cooler would also fit in the case. Luckily it did fit in the case (more on that below), and the clearance over the memory worked out quite well. The NH-C14S is flexible in that the fan, pictured in the bottom position, can also be moved to the top of the radiator in a pull configuration, or you can put fans on both sides of the radiator, IF your case fits that kind of height.

Case Fit

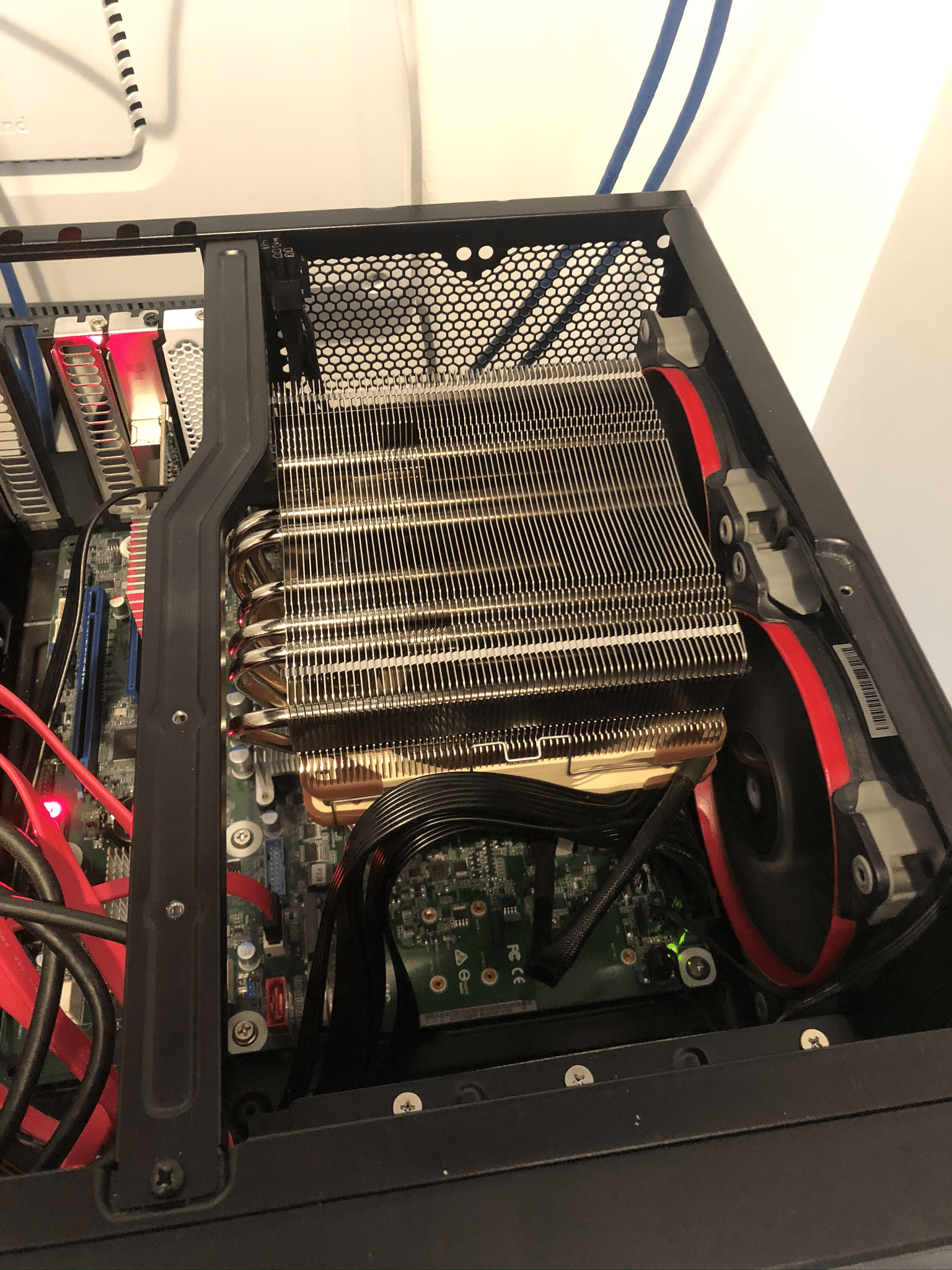

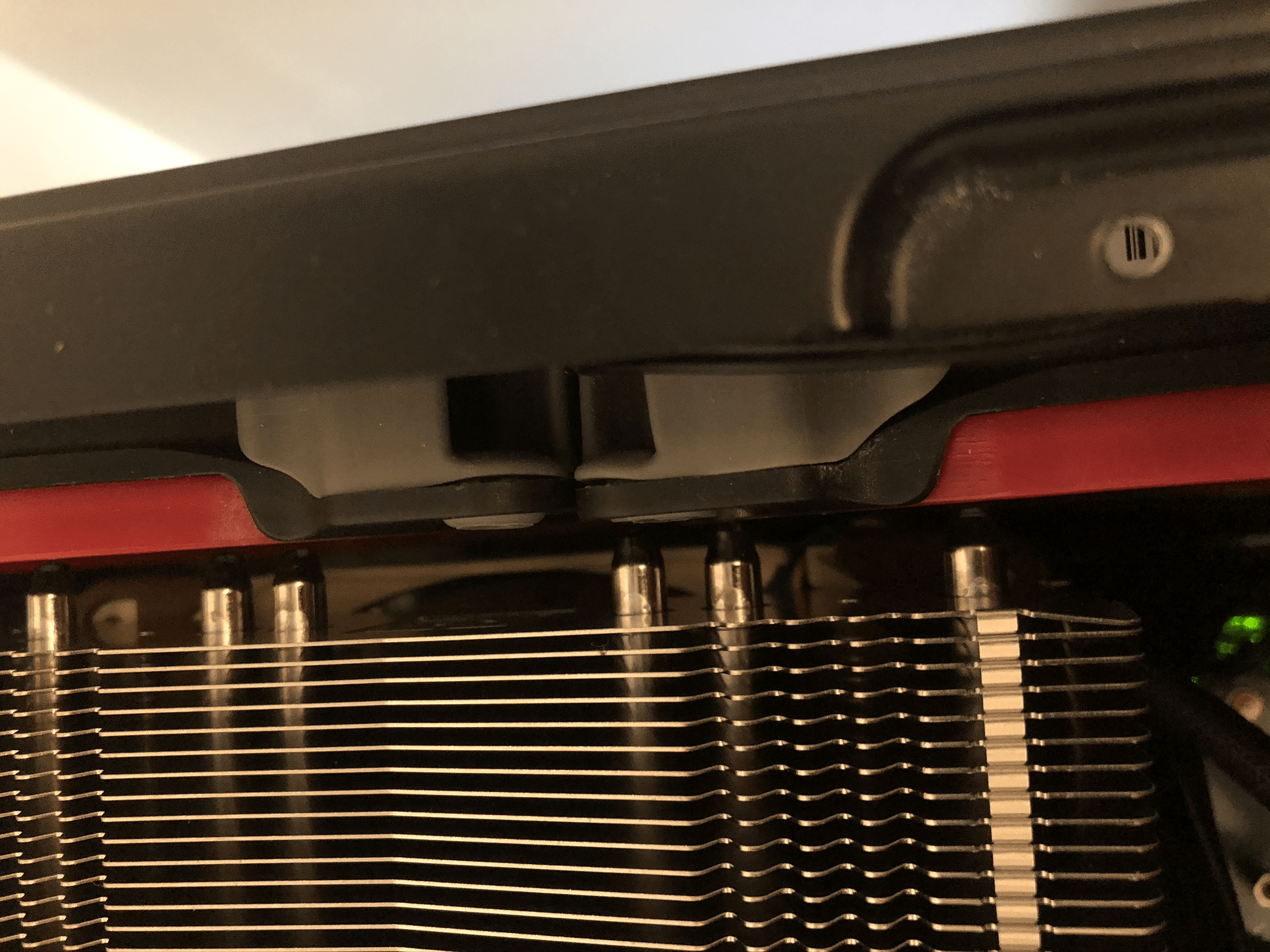

The case I've been using for my primary hypervisor is a SilverStone GD09B, a nice HTPC case that has fit in the spots I've had my homelab in for quite some time. With this build I wanted to make sure the components would fit in the same case, since it fits my current server closet. What I found, after getting the motherboard and CPU cooler installed, is that while the hardware fit in the case, it didn't fit well. I've been using two Corsair 120mm fans to provide exhaust (an important note is that these are 3-pin fans; we'll get to that), and it turns out that these full-size fans pressed right up to the CPU cooler radiator. Not Fitting Well!

While it looks like a pretty tight fit, it is actually worse than it looks! The CPU cooler radiator actually has studs on the end of it which almost touch the fan blades. You can see it is a REALLY tight fit!

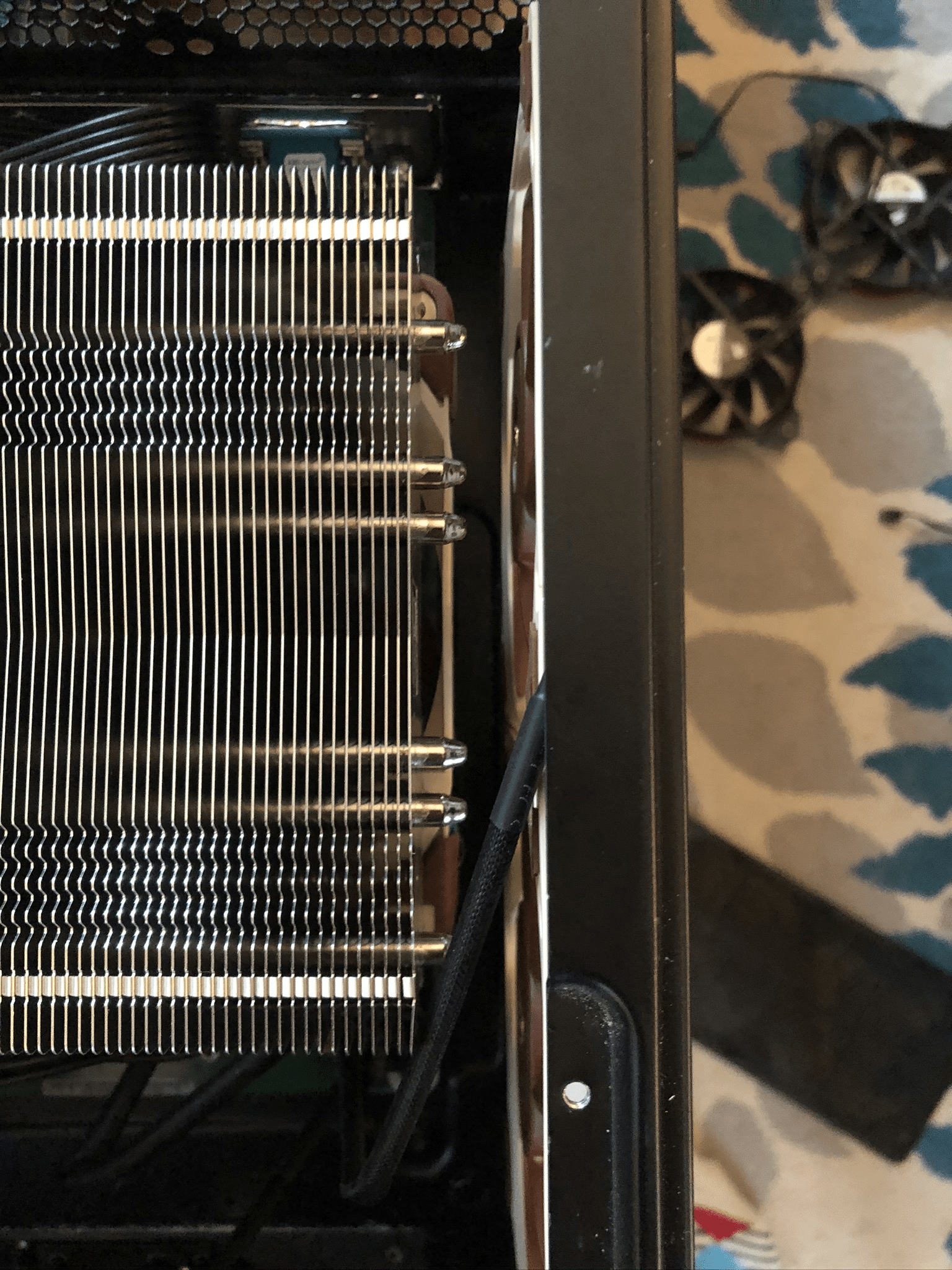

While this was a workable solution, since the fan could spin without issue, it still felt a bit weird leaving such a small amount of space. Also, as I mentioned above, these are 3-pin fans, which means they can't take advantage of PWM, the ability to modulate the speed of the fans based on the temperature in the case. This means that the fans were running at 100% at all times. Not great for their life, especially given that they were already 7 years old. Luckily I found the Noctua NF-A12x15, the 15 meaning 15mm thick. Compared with the 25mm thickness of the Corsair fans, the Noctua fans fit MUCH better. Additionally, they are 4-pin fans, which support PWM!
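To make the PWM idea concrete, here is a minimal sketch of a fan curve: a function that maps a temperature to a fan duty cycle using linear interpolation. The temperature thresholds and duty percentages are made-up illustrative defaults, not values taken from this board's firmware, and the function name is hypothetical.

```python
def fan_duty_percent(temp_c, min_temp_c=30.0, max_temp_c=70.0,
                     min_duty=30.0, max_duty=100.0):
    """Map a temperature (Celsius) to a PWM duty cycle (percent).

    All thresholds are illustrative assumptions, not firmware values.
    Below min_temp_c the fan idles at min_duty; above max_temp_c it
    runs flat out; in between the duty cycle rises linearly.
    """
    if max_temp_c <= min_temp_c:
        raise ValueError("max_temp_c must be greater than min_temp_c")
    if temp_c <= min_temp_c:
        return min_duty
    if temp_c >= max_temp_c:
        return max_duty
    # Fraction of the way from the low threshold to the high threshold.
    fraction = (temp_c - min_temp_c) / (max_temp_c - min_temp_c)
    return min_duty + fraction * (max_duty - min_duty)


if __name__ == "__main__":
    for temp in (25, 41, 55, 80):
        print(f"{temp} C -> {fan_duty_percent(temp):.1f}% duty")
```

A 3-pin fan wired straight to full voltage behaves like this curve pinned at 100% regardless of temperature, which is the situation described above.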

Motherboard Features

The ASRock Rack X470D4U motherboard was interesting for a few reasons. First, it comes with its own out-of-band management controller (BMC), so you can manage the server even if it is off. Given that this system is going to be living in a server closet without a monitor nearby, this is a very attractive feature. BMCs are normally found only on servers or heavy-duty workstation boards, so being able to find an ATX board that supported the current Ryzen chips was very helpful. The BMC firmware wasn't too out of date, nor was the motherboard firmware, and ASRock has done a pretty good job of simplifying the upgrade process.

One of the other huge benefits of a BMC, in addition to remote power and sensor capability, is that it almost always comes with over-the-network keyboard, video, mouse (KVM) support. This means that if you need to access your system in a way that would normally require attaching a monitor, keyboard, and mouse, you can do this via your browser. There was a time when the network KVM provided by a BMC required some Java functionality, but anything made in the last 5 years should support HTML5 out of the box.
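The remote power and sensor features can also be scripted. The sketch below assumes the BMC exposes IPMI over LAN and that the `ipmitool` CLI is installed on the machine running the script; neither is stated in this post, so treat both as assumptions. The hostname and username are hypothetical placeholders, and the password is read by `ipmitool` from the `IPMI_PASSWORD` environment variable (the `-E` flag) so it never appears on the command line.

```python
import subprocess


def ipmi_command(host, user, *args):
    """Build an ipmitool command line for a BMC reachable over the network.

    Assumes IPMI-over-LAN is enabled on the BMC. The -E flag tells
    ipmitool to read the password from the IPMI_PASSWORD env variable.
    """
    return ["ipmitool", "-I", "lanplus", "-H", host, "-U", user, "-E", *args]


def run_ipmi(host, user, *args):
    """Run the command and return its stdout; raises if ipmitool fails."""
    result = subprocess.run(
        ipmi_command(host, user, *args),
        capture_output=True, text=True, check=True,
    )
    return result.stdout


if __name__ == "__main__":
    # Hypothetical BMC address and account, shown without executing anything.
    host, user = "bmc.example.lan", "admin"
    print(" ".join(ipmi_command(host, user, "chassis", "power", "status")))
    print(" ".join(ipmi_command(host, user, "sdr", "list")))
```

Calling `run_ipmi(host, user, "chassis", "power", "status")` would query the power state, and `"sdr", "list"` would dump the sensor readings, provided the assumptions above hold for your BMC.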

Overall I'm happy with the motherboard features even though it is a bit of a tight fit for my case and with my CPU cooler/RAM choice. I think ASRock could have done a much better job of providing information on just how much clearance there isn't for the RAM but hopefully with a bit of research anyone who is looking to buy this motherboard will find this out for themselves before they need to return a CPU cooler that just won't fit.

VMware and Software RAID

So getting all of the hardware put together wasn't too much of a pain. Luckily everything fit! You might notice that while I went with duplicate 1 TB SSDs, I only have a single HDD. For this build, I wanted to have a RAID 1 primary drive with a new, larger HDD for throw-away information. I installed the Startech RAID card, got my SSDs attached to it, booted the server, and immediately realized that the X470D4U actually came with built-in software RAID! Whelp, there is a $60 RAID card I didn't actually need! If you are a VMware admin or have been around the homelab community for some time, you might realize the slight problem with that sentence. If you don't realize what the problem might be, take a look at this Google search. Oh yeah, VMware doesn't support software RAID.

Again, luckily, I already had a RAID card! I guess it is better to have something that you don't need than to not have something you do need? After re-attaching my SSDs to the RAID card and following the instructions to enable it in the BIOS, I was good to go.
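As a quick aside on what RAID 1 costs in capacity: mirroring the two 1 TB SSDs leaves 1 TB usable, because every block is written to both drives. The small sketch below works that out and contrasts it with striping (RAID 0). The function name is hypothetical and it only covers these two levels.

```python
def usable_capacity_tb(raid_level, drive_sizes_tb):
    """Return usable capacity in TB for a simple RAID 0 or RAID 1 array.

    RAID 1 mirrors every drive, so capacity equals the smallest member.
    RAID 0 stripes across drives, so capacity is the smallest member
    multiplied by the number of drives (with no redundancy at all).
    """
    if len(drive_sizes_tb) < 2:
        raise ValueError("need at least two drives")
    smallest = min(drive_sizes_tb)
    if raid_level == 1:
        return smallest
    if raid_level == 0:
        return smallest * len(drive_sizes_tb)
    raise ValueError("only RAID 0 and RAID 1 are handled in this sketch")


if __name__ == "__main__":
    ssds = [1.0, 1.0]  # the two 1 TB SSDs from the parts list
    print("RAID 1 usable TB:", usable_capacity_tb(1, ssds))
    print("RAID 0 usable TB:", usable_capacity_tb(0, ssds))
```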

For my homelab I tend to run a primary and a secondary hypervisor setup, where I have one really good hypervisor and one not-so-good hypervisor (normally my older really good hypervisor). I've been a VMUG Advantage member for a few years, which allows me to actually run a licensed set of vSphere hypervisors with a licensed vCenter server as well! In addition to VMware's servers, a VMUG Advantage membership also gets you 365 days of a "trial" license for both their Windows and Mac virtualization products as well as a few different VMware server products. The cost for VMUG Advantage is ~$200 per year, which may seem steep, but compared to the cost of a few of their products it isn't too bad! Obviously the VMUG Advantage license is for lab use and shouldn't be used in a for-profit setting, but be sure to read the full license over at the VMUG Advantage site!

Overall I'm really happy with the build, and the hardware has been performing quite well for the past few weeks. CPU temps haven't been above 41°C, which, for a current 16-core Ryzen CPU, is pretty good!